any game at 60fps

the game

Any true 2D game, because the console was designed for 2D games. The SuperFX chip used for Star Fox was also used for Yoshi’s Island, which did maintain 60Hz.

Yeah? Well that’s like 40 triangles!

And this required extra in-cartridge hardware to render it.

Do a bagel roll.

I can hear this image. Starfox OST is the shit.

What’s neat is that you don’t remember old games looking like this. You remember them looking great, because your imagination filled in the gaps.

To be fair, the soft edges of CRTs were much more forgiving when viewing chunky pixels.


I’m shocked that a meme creator that used Soldier Boy as the Glorious Past had nostalgia goggles on!

Ok, well, not that shocked, but honestly I don’t remember it happening either.

The cart sequence in the Armored Armadillo stage of Megaman X drops to like 1FPS if you get enough sprites on the screen.


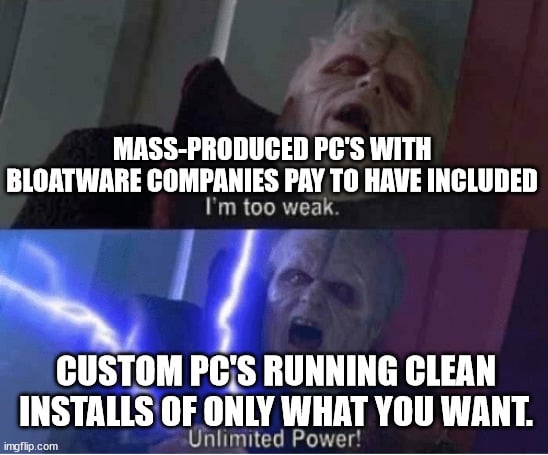

Linux helps enormously to make older PCs useable

We get it. Linux is the greatest thing ever. Good grief.

Who would win: Bloatware on mass produced pre-builts, or a thumb drive with Windows Media Creation kit?

SNES resolution was 256 x 224 with 15-bit colour. You’re running Chrome on a 3,840 x 2,160 screen with 32-bit colour. That’s about 308x more data per frame to render. You should be really impressed that in a span of just three decades we got a 300x improvement in performance.
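For anyone who wants to check the 308x figure, here is a quick back-of-the-envelope sketch. It only multiplies out the numbers quoted above (resolution times colour depth); the function name is made up for illustration, and it ignores tiles, sprites, compositing and everything else that actually goes into a frame.

```python
# Rough per-frame data comparison using only the figures quoted in the comment.
# bits_per_frame is a hypothetical helper, not part of any library.
def bits_per_frame(width, height, bits_per_pixel):
    return width * height * bits_per_pixel

snes_bits = bits_per_frame(256, 224, 15)    # SNES: 256 x 224, 15-bit colour
uhd_bits = bits_per_frame(3840, 2160, 32)   # 4K desktop: 3,840 x 2,160, 32-bit colour

pixel_ratio = (3840 * 2160) / (256 * 224)   # pixels only, ignoring colour depth
data_ratio = uhd_bits / snes_bits           # pixels and colour depth together

print(f"{pixel_ratio:.1f}x the pixels, {data_ratio:.1f}x the data per frame")
```

So roughly 145x comes from the pixel count alone, and the jump from 15-bit to 32-bit colour accounts for the rest.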

It’s almost like having double frame buffers for 720p or larger, 16 bit PCM audio, memory safe(ish) languages, streaming video, security sandboxes, rendering fully textured 3d objects with a million polygons in real time, etc. are all things that take up cpu and ram.

I didn’t realize web browsing in Chrome required fully textured 3D objects. Not to mention playing 720p video with PCM audio in a separate app doesn’t grind everything to a halt.

well the gpu doesn’t care if it’s 2d or 3d, but you are rendering a whole bunch of textured triangles… (separated into tiles for fast partial or multithreaded re-rendering), and also just-in-time rasterizing fonts, running a complex constraint solver to lay out the ui, parsing 3 completely separate languages, communicating using multiple complex network protocols, doing a whole bunch of interprocess communication in order to sandbox stuff

Everything, including Chrome, is rendered as a 3D object these days. It’s a lot faster, but takes more RAM.

There are shared libraries that have to be loaded regardless of you having a tab that uses them or not.

That’s not how dynamically loaded libraries work. They load and unload as needed.

I will run any game at 60fps if it was designed for this exact machine that does nothing but play games designed for it and is also 16-bit with pixel graphics and also has low quality audio and also fits in the memory of the cartridge

Are you talking about games? There, it’s mostly textures.

Web, that’s a whole other story, why it uses so much RAM.

WebGL means the browser has access to the GPU. Also, the whole desktop tends to be rendered as a 3D space these days. It makes things like scaling and blur effects easier, among other benefits.

tends to be rendered as a 3D space

Good to know, thanks.

Someone clearly didn’t play SNES games on the original hardware.

Yeah, slowdowns, choppy graphics, and other glitches to be had.

“Any SNES game” is pretty much just F-ZERO.

Actually, fun fact: F-ZERO runs at a locked 60FPS for every single release. SFC, N64, GBA and GC. It’s some really impressive stuff for N64.

Idk if it’s true but I’ve been told no Kirby game has ever been updated

I have a ThinkPad T61, a laptop from 2007, with only 4 GB of RAM. I can open Firefox with 10 tabs, including a Youtube video at 480p, and still have 1GB of RAM left. Yet people act like 8GB is unusable these days.

Is it windows or Linux based?

I think it’s sarcasm based

No? I’m being serious

10 tabs and a single video playing at 480p is usable to you? ._.

Linux

Firefox yeah I could see that. If you were running Chromium you’d be having some trouble.

Personally 8gb is more comfortable, but if 4gb works for you, then who am I to tell you anything different?

I hope that you have an SSD in there at least!

If we need to get into this kind of debate, may I remind everyone that the computer that brought humanity to the moon had 2k of RAM

Well yeah, that computer didn’t have to hold Aldrin’s porn collection in memory.

He brought the physicals!

That was a lot of RAM for the time though.

And for several years that one program was consuming the entire national supply of integrated circuits.

I watched the moon landing at 60fps on a TV that cost me $80.

Why can’t I play max resolution BG3 at this framerate?

Because the moon landing was rendered by the TV station and your TV only showed the end result. You can do the same with GeForce NOW or other streaming service.

And a 2 MHz processor lol

NTSC is 30 fps.

The console ran at 60 on NTSC, and 50 for PAL. Divide by two to get the standard.

Cuz interlacing

The Super Nintendo’s interlaced video mode was basically never used. It could output 60Hz and more often than not did.

Only some games had limited framerate for various reasons, such as Another World being limited by cartridge RAM or Star Fox being limited by the power of the SuperFX. Yoshi’s Island also used the SuperFX and wasn’t limited like Star Fox was. Occasionally there was slowdown if a developer put too much on screen at once, but these were momentary and similar to today when a game hitches while trying to load a new area during gameplay.

At 480i. SNES used 240p, which is technically not standard NTSC, but compatible. Nintendo called this “double strike”, since each field would display in the same location.

Interesting.

NTSC is 59.94hz ???

It is 59.94 fields per second, translating into 29.97 FPS. Interlaced video is fun. Reason why it’s not a round 60 or 30 FPS is due to maintaining compatibility with black and white sets.

240p uses each field as a frame, though, while still maintaining compatibility with NTSC. This is what most consoles pre-6th generation use (same with PAL, but 288p at 50 FPS).
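To put numbers on the fields-versus-frames point, here is a small sketch. The exact 60000/1001 fraction is the standard nominal colour NTSC field rate and is not stated in the thread, so treat it as an assumption added here; real console timing also differs slightly from the broadcast figure.

```python
from fractions import Fraction

# Nominal colour NTSC field rate: 60 Hz scaled by 1000/1001.
# Assumption: this exact fraction is the usual broadcast figure, not from the thread.
NTSC_FIELD_RATE = Fraction(60000, 1001)

# 480i: two interlaced fields make one full frame.
interlaced_fps = NTSC_FIELD_RATE / 2

# 240p: every field is drawn in the same position and treated as a full frame.
progressive_240p_fps = NTSC_FIELD_RATE

print(f"fields per second: {float(NTSC_FIELD_RATE):.2f}")
print(f"480i frames per second: {float(interlaced_fps):.2f}")
print(f"240p frames per second: {float(progressive_240p_fps):.2f}")
```

That is where both the "NTSC is 30 fps" and the "it ran at 60" comments come from: same signal timing, counted per frame pair or per field.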

Interlaced

Kinda but also kinda 60

Interlacing is trash

Interlacing is native on CRT displays, which is what SNES was made for.

Interlacing is native to US broadcast TV. CRTs don’t have to be interlaced. Computer CRTs were rarely interlaced.

Okay fine, be particular and ignore the context. Interlacing is native on CRT displays WHEN DISPLAYING NTSC OR PAL, which is what SNES was made for.

I’m just being nitpicky because you are using CRT interchangeably with Television. CRT’s are used in TV’s but aren’t interlaced unless the circuitry around them sends interlaced. So no, interlacing is not native on CRT’s when receiving an interlaced signal. If I plugged a Nintendo into my old ViewSonic CRT, I wouldn’t get a signal because it didn’t support NTSC interlaced input.

It’s like saying interlacing is native on LCDs. LCD TVs are interlaced, not LCDs.

I’m just being nitpicky because you are using CRT interchangeably with Television.

That was intentional on my part because of the audience and good communication. You’re technically correct, but without a paragraph of tangential and irrelevant explanation your audience isn’t going to understand you. Modern parlance usage of “television” isn’t the CRT appliance, it’s any appliance that shows the moving pictures and sound content of television programming. If you walk into any store today and buy a TV, you’re going to get an LCD, AMOLED, or quantum dot display. None of those are CRTs, yet everyone born after about 2002 will associate a TV or Television with a flat panel non-CRT display.

So no, interlacing is not native on CRT’s when receiving an interlaced signal.

And in nobody’s mind was the vision of plugging a SNES into a computer monitor CRT. You introduced that idea only to show how it’s wrong. You win at pedantry, but lose at communication.

If someone says to you “I’m watching TV”, do you poke your head around the back of the unit to make sure it has a tuner in it and if it doesn’t you quip back to correct them “You’re not actually watching a TV, you’re watching a monitor. A TV requires a tuner, which this unit does not have, making it a monitor, not a TV”?

Yes, hence my comments.

Even interlaced it’s still 60 frames per second.

Sure they were technically 30 “fields” per second, but most games updated 60 times a second, even SMB on NES. You only saw one half of what the internal console rendered which is an output issue, not a rendering one.

Add on 480p and you get both 60 frames and 60 fields per second

“any game”

* Except Star Fox.

(And I guess Stunt Race FX, too.)

And many parts of Gradius 3.

3 pixels on the screen that you have to squint at and use your imagination.

Hey, my imagination was pretty good in 1990!

Fab Five Freddy told me everybody’s fly

You DO know that 30 fps wasn’t a thing back then, right?

It absolutely was. Many games would update the display only on every other frame; the SNES also had an interlaced graphics mode that was 30hz.

Huh, interesting. Still, funny how a GBA is more powerful than the SNES. Lots of respect for both though, even if I didn’t get to try them before.